Market Dynamics

Introduction

Datacenter AI servers are specialized high-performance computing systems designed to handle artificial intelligence and machine learning workloads within modern data center environments. These servers integrate advanced GPUs, AI accelerators, high-speed interconnects, and optimized memory architectures to process massive datasets and complex algorithms efficiently. Unlike traditional servers, AI servers are engineered for parallel processing, enabling faster training and inference for deep learning models. As organizations increasingly rely on data-driven decision-making, datacenter AI servers are becoming essential infrastructure components supporting applications such as natural language processing, computer vision, and real-time analytics across diverse industries worldwide.

One of the key factors driving the datacenter AI servers market is the rapid expansion of artificial intelligence adoption across industries. Enterprises are leveraging AI for automation, predictive analytics, customer personalization, and operational optimization, creating strong demand for high-performance computing infrastructure. The surge in big data generation, combined with the need for real-time insights, is pushing organizations to deploy dedicated AI servers. Additionally, cloud service providers are expanding AI-enabled offerings, encouraging businesses to scale AI workloads efficiently. This growing reliance on data-intensive applications continues to accelerate investments in advanced datacenter AI server technologies.

Another major growth driver is the increasing adoption of generative AI, high-performance computing, and advanced analytics workloads. Applications such as large language models, recommendation engines, autonomous systems, and scientific simulations require immense computational power and low-latency processing. Datacenter operators are investing in AI-optimized hardware, including GPUs and custom accelerators, to support these demanding tasks. Furthermore, the rise of hyperscale data centers, edge AI deployments, and digital transformation initiatives is boosting infrastructure upgrades. Continuous innovation in processor architectures, energy-efficient designs, and high-speed networking solutions is further strengthening market growth.

Recent Market JVs and Acquisitions:

A considerable number of strategic alliances, including mergers and acquisitions (M&As) and joint ventures (JVs), have taken place over the past few years:

- In July 2025, Hewlett Packard Enterprise Company finalized its acquisition of Juniper Networks for $14 billion. The acquisition enables HPE to expand its networking portfolio and to support the high-performance networks that large-scale AI environments and GPU-based data centers depend on.

- In March 2024, Cisco Systems completed its acquisition of Splunk for $28 billion. The deal is the largest in the company’s history and is expected to strengthen its offerings in the AI and data center infrastructure management market.

- In May 2024, Lenovo announced a partnership with NetApp to launch NetApp AIPod with Lenovo ThinkSystem Servers, a converged infrastructure solution for generative AI workloads. The solution combines Lenovo servers accelerated by NVIDIA GPUs with NetApp storage and NVIDIA AI software.

- In 2025, Foxconn announced a strategic partnership with OpenAI to develop large-scale AI data center infrastructure. Under this partnership, Foxconn will contribute on the manufacturing side, producing AI server components and other hardware needed for AI infrastructure.

Recent Product Development:

- In May 2025, Dell Technologies introduced the next-generation Dell PowerEdge XE Series built on the NVIDIA Blackwell GPU architecture. These systems support dense multi-GPU configurations and both air and liquid-cooled designs, enabling enterprises and hyperscale data centers to run large-scale AI training and inference workloads more efficiently.

- In March 2025, Supermicro introduced new direct liquid-cooled AI servers designed for the NVIDIA Blackwell GPU platform. These systems are built to support higher rack power densities and improved thermal efficiency, helping data centers deploy large GPU clusters for advanced AI training workloads.

- In November 2025, Supermicro introduced AI Factory cluster solutions that integrate GPU servers, networking, and AI software into rack-scale infrastructure. The solutions are designed to simplify large AI deployments and support clusters scaling from dozens to hundreds of GPUs in data center environments.

Market Segments' Analysis

|

Segmentations

|

List of Sub-Segments

|

Segments with High-Growth Opportunity

|

|

Form Factor-Type Analysis

|

Rack Servers, Blade Servers, and Tower Servers

|

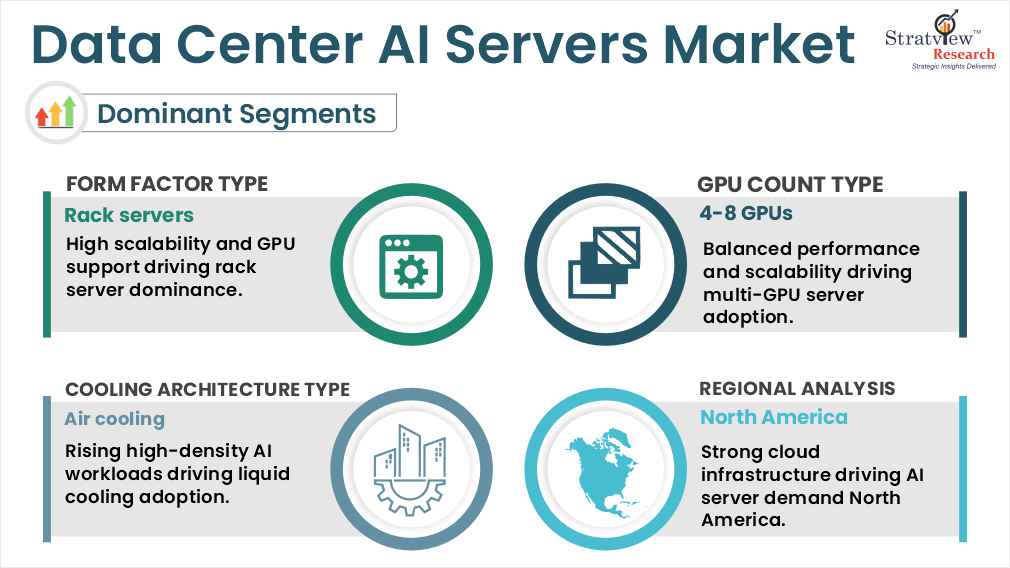

Rack servers dominate the market and are expected to maintain their leading position.

|

|

GPU Count-Type Analysis

|

1-2 GPUs, 2-4 GPUs, and 4-8 GPUs

|

Servers with 4-8 GPUs currently account for the largest share, while 2-4 GPU configurations are projected to grow at a faster pace as enterprise AI adoption increases.

|

|

Cooling Architecture-Type Analysis

|

Air Cooling and Liquid Cooling

|

Air cooling currently holds the largest share, though liquid cooling is anticipated to grow more rapidly to meet higher thermal and power density requirements.

|

|

Data-Center-Type Analysis

|

Hyperscale, Colocation, Enterprise, and Edge

|

Colocation data centers currently lead deployments, while hyperscale facilities are expected to grow faster, driven by large-scale AI workloads.

|

|

Region Analysis

|

North America, Europe, Asia-Pacific, and The Rest of the World

|

North America leads the market due to strong AI investments and cloud provider presence, while Asia-Pacific is expected to show the highest growth.

|

By Form Factor Type

“Rack servers dominate the market due to their high scalability, thermal management capabilities, and support for multiple GPU configurations. Blade servers and tower servers are used for niche deployments.”

The data center AI servers market, segmented by form factor into rack, blade, and tower servers, is dominated by rack servers owing to their superior scalability and flexibility. Rack servers are widely preferred for hyperscale and enterprise AI deployments because they support high power-density configurations and advanced thermal management. Their modular design allows seamless integration of multiple GPUs and PCIe expansion cards, enabling efficient handling of complex AI workloads. Additionally, rack-based architectures simplify maintenance, optimize space utilization, and support rapid infrastructure scaling, making them the preferred choice for large-scale AI data center environments.

Blade servers and tower servers, while present in the market, cater primarily to niche deployments. Blade servers face limitations in thermal management, particularly when handling high-end GPU configurations that generate significant heat during intensive AI processing. This restricts their adoption in high-density AI environments. Similarly, tower servers lack the modularity and scalability required for multi-GPU deployments, making them less suitable for large AI workloads. Consequently, organizations prioritize rack servers for their performance efficiency, cooling capability, and ability to support evolving AI infrastructure demands, reinforcing their continued market dominance.

By GPU Count Type

“Servers with 4-8 GPUs are the most common, powering both training and inference, while smaller GPU configurations are increasingly adopted for lighter AI workloads.”

The data center AI servers market is segmented by GPU count into 1-2 GPUs, 2-4 GPUs, and 4-8 GPUs. Servers equipped with four to eight GPUs dominate the market, as they provide the computational power required for intensive AI model training and large-scale deployments. These configurations strike an effective balance between performance, scalability, and power efficiency, making them suitable for hyperscale and enterprise environments. High-density GPU setups significantly reduce training time for complex models and support parallel processing of large datasets. Their flexibility to handle both training and inference workloads further strengthens adoption, reinforcing their position as the most widely deployed GPU configuration.

Smaller GPU configurations, including one to two GPUs and two to four GPUs, are gaining traction across enterprise and edge deployments. These setups are ideal for lighter AI workloads, inferencing tasks, and pilot implementations where full-scale computing power is unnecessary. Organizations benefit from lower costs, reduced power consumption, and easier deployment in space-constrained environments. As AI adoption expands across diverse use cases, these smaller configurations support incremental scaling strategies, complementing high-density servers and contributing to overall market growth.

By Cooling Architecture Type

“Air cooling is currently used in a majority of AI server deployments owing to its compatibility with existing data center infrastructure, whereas liquid cooling is gaining traction due to increasing AI workloads requiring higher power densities and efficient cooling.”

The data center AI servers market is segmented by cooling architecture into air cooling and liquid cooling. Air cooling is the dominant architecture in data centers where AI servers are deployed, owing to its compatibility with existing data center infrastructure. However, as AI workloads demand higher GPU densities, and with them higher power densities, liquid cooling is gaining traction for its ability to remove heat more efficiently.

By Data Center Type

“Colocation data centers currently account for a significant share of AI server deployments due to growing enterprise demand for outsourced infrastructure, while hyperscale data centers are witnessing the fastest growth as cloud providers expand AI computing capacity at scale.”

The data center AI servers market is segmented by data center type into hyperscale, colocation, enterprise, and edge. Colocation data centers currently hold a strong share of AI server deployments, as many enterprises prefer hosting high-performance infrastructure in third-party facilities that offer scalable power, cooling, and connectivity without the need to build their own data centers. Hyperscale facilities are the fastest-growing segment, driven by cloud providers expanding infrastructure to support generative AI, large language models, and other large-scale AI workloads. Enterprise data centers continue to adopt AI servers for internal analytics and application development, typically at a smaller scale, while edge data centers are gradually emerging to support low-latency inferencing and real-time data processing closer to end users.

By Region Type

“North America leads the data center AI servers market, driven by strong cloud infrastructure and early AI adoption, while Asia-Pacific demonstrates the fastest regional growth, fueled by rapid data center expansion and increasing AI investments.”

The data center AI servers market is segmented by region into North America, Europe, Asia-Pacific, and the Rest of the World. North America leads the market, supported by its strong cloud ecosystem, early adoption of artificial intelligence technologies, and significant investments from hyperscale providers. The region benefits from advanced data center infrastructure, high-performance computing demand, and continuous innovation in AI hardware and software. Major technology companies are actively expanding AI-ready facilities to support generative AI, analytics, and machine learning workloads. Additionally, strong research and development capabilities, coupled with enterprise digital transformation initiatives, continue to drive deployment of high-density AI servers, reinforcing North America’s dominant market position.

Asia-Pacific is emerging as the fastest-growing region in the data center AI servers market, fueled by the rapid expansion of data center capacity and rising AI investments across key economies. Countries such as China, Japan, and India are witnessing accelerated adoption of AI-driven applications, supported by government initiatives and increasing cloud deployments. Growing internet penetration, digital transformation, and demand for real-time analytics are encouraging enterprises to invest in AI-ready infrastructure. Meanwhile, Europe maintains steady growth through enterprise modernization, while other regions gradually expand adoption as digital infrastructure and colocation investments strengthen.